Now we'll name the function and specify the queue name it's going to watch: It's going to be a queue trigger that I write in C# so we'll grab that option: The Azure Function kicks off by creating a new one in the portal with some pretty basic details:īut let's instead just select "New Function" and start there: So that's the prerequisite: messages in a queue with each one containing a single IP address NET first because I just want to focus on functions here. If that's an unfamiliar paradigm to you then check out Get started with Azure Queue storage using. My web app is already deciding when an IP is being abusive (and there's parameters around that I won't go into here) and then dropping it into an Azure storage queue. So let's do this: let's use an Azure Function to take abusive IP addresses and submit them to CloudFlare to be blocked. The value proposition of Azure Functions is that they're very small units of code that can be quickly written and deployed then triggered by events. Of course you pay for that too in a pay-per-execution billing model (more on that later), but now it's just a money discussion and not a scaling one.Īzure's interpretation of serverless code is their Functions feature which is still in preview at the time of writing, but this is a perfect use case as it's something non-critical to the actual function of the site so a good place to dip a toe in the water.

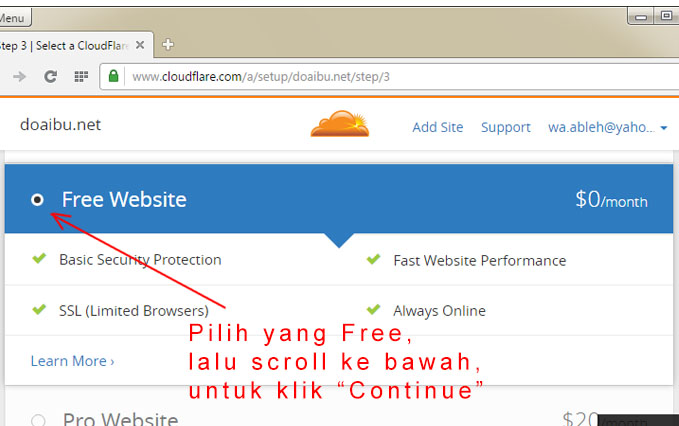

One of the key tenets of a serverless architecture is that when it's provisioned in the way Microsoft has done it here, you just never even think about scaling underlying resources of what sort of load you're generating on the environment, it's just an endless stream of constant service that you consume at will. But they do draw resources from the infrastructure they run on and the hot thing these days is "serverless" which is like, on servers, but you kinda don't know it. I love that they run in your existing website therefore don't cost any more, I love that they deploy along with the site and I love their resiliency. Normally I'd stand up a WebJob to do this and I've written at length about my love of these. And whilst there's a heap of IP addresses being abusive (refer back to that post), I can programmatically identify them. For example, you can block an IP address outright. One of the great things about having CloudFlare in front of the site is that it opens up options of how to handle traffic upstream of your server, or origin as it's often referred to. This was particularly useful when the traffic went from API abuse to all out attempted DDoS and I'll write more about how I handled that in the future (I'd like to wait until things settle down first). I started routing traffic through CloudFlare at the time of the blog post I mentioned in the opening paragraph. But that's not really fair now, is it? I mean that I should be paying out of my own pocket just to serve 45-something-million HTTP "Too Many Requests" responses to someone who's getting absolutely zero value out of them anyway.

That was unchanged from the week before which also had zero downtime and I achieved that because I just spent money on scale to keep it fast for everyone. In fact, it is zero as my weekly New Relic report from Monday shows (note that "views" doesn't include API hits which is where all the traffic way being directed to): It was only that high because someone also came along and decided to throw an automated scanning tool at it so as far as I'm concerned, downtime was effectively zero. However, in that 24-hour period where 45 million requests were served, the error rate according to New Relic was 0.0011%. That's as measured by CloudFlare and you can see that they passed 97% of the requests on to my site. However, just because there's no point in it doesn't mean that people aren't going to do it anyway as my traffic stats last weekend would attest to: By limiting requests to one per every 1.5 seconds and then returning HTTP 429 in excess of that, the rate limit meant there was no longer any point in hammering away at the service. It was causing sudden ramp ups of traffic that Azure couldn't scale fast enough to meet and was also hitting my hip pocket as I paid for the underlying infrastructure to scale out in response. I wrote recently about how Have I been pwned (HIBP) had an API rate limit introduced and then brought forward which was in part a response to large volumes of requests against the API.
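To make the moving parts concrete, here's a minimal sketch of the work a queue-triggered function like this has to do with each message: take the single IP address off the queue, check it really is an IP address, and shape a "block this IP" request for CloudFlare. It's written in Python rather than the C# used for the real function and it is not the actual code behind HIBP. The function name `build_block_request`, its parameters and the note text are hypothetical, and the endpoint path and `X-Auth-Email` / `X-Auth-Key` headers are assumptions based on CloudFlare's v4 IP access rules API, so verify them against the current documentation. The sketch only builds the request and never sends it.

```python
import ipaddress
import json

# Assumed base URL for CloudFlare's v4 API; confirm against the current docs.
API_BASE = "https://api.cloudflare.com/client/v4"


def build_block_request(queue_message: str, zone_id: str, email: str, api_key: str) -> dict:
    """Turn one queue message (a single IP address) into a request description.

    All names here are illustrative. Nothing is sent over the network.
    """
    # Normalise and validate the message; ip_address raises ValueError for
    # anything that isn't a valid IPv4 or IPv6 address.
    ip = str(ipaddress.ip_address(queue_message.strip()))

    # Assumed payload shape for a zone-level IP access rule.
    body = {
        "mode": "block",
        "configuration": {"target": "ip", "value": ip},
        "notes": "Blocked automatically for abusive traffic",
    }

    return {
        "method": "POST",
        "url": f"{API_BASE}/zones/{zone_id}/firewall/access_rules/rules",
        "headers": {
            "X-Auth-Email": email,
            "X-Auth-Key": api_key,
            "Content-Type": "application/json",
        },
        "body": json.dumps(body),
    }
```

Keeping the request building separate from the sending means the validation can be exercised without touching the network or needing real credentials. In a real function the returned dictionary would be handed to whatever HTTP client the runtime provides, and a message that fails validation would be left to the queue's own retry and poison message handling.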
